‘Democratization’ can be a dirty word. Some folks hear it and conjure up long conference calls or work meetings abuzz with words like synchronicity and alignment. On the much more positive side, however, democratization is a good thing—a resource that is useful becomes more readily available to the masses.

When it comes to artificial intelligence (AI) and enterprise IT, democratization offers a bit of both the positive and the negative. Let’s take a look.

What does it mean to democratize AI?

In a democratic society, all people are represented. Similarly, when something is democratized, all people can access it. Democratization is the idea that everyone gets the opportunities and benefits of a particular resource. In this case, it’s artificial intelligence. If the pinnacle of 20th century technology was getting tech into the hands of more people, the future of technology in the 21st century is intelligence.

When it comes to enterprise IT, democratizing AI means making intelligence accessible for every organization, perhaps even for every person within the organization. The future of AI is using hardware and software to:

- See and hear patterns

- Make predictions

- Learn and improve

- Take specific actions with this intelligence

Tech enthusiasts (optimists?) see AI as a tool for good that can help every person achieve more and achieve better.

(Compare AI & machine learning.)

The availability of AI

What is the state of AI today? Artificial intelligence is in use in some workplaces. Often small tech-savvy startups and large firms with lots of capital, like technology and finance businesses, are deploying fairly sophisticated forms of artificial intelligence:

- Machine learning is able to notice fraudulent activities or help better plan business decisions.

- Natural language processing is improving with each new virtual assistant out there.

But lots of other companies are behind. They may not know how to start using AI, and they may not have the resources to create their own. Cloud technologies are filling this gap: with options from Google, AWS, Microsoft, and plenty of other vendors, companies can begin exploring how AI can help them. (AI as a service (AIaaS) is one low-barrier entry to AI.)

Aside from AI feeling complicated or overwhelming, it can be downright expensive for many companies, especially when wading into the in-demand field of data scientists and data analysts.

With big tech firms way ahead of the curve, the industry ponders whether other enterprises (SMBs, non-tech firms, etc.) can really benefit from AI, let alone catch up. After all, it has been shown that, at least so far, the more data a company has, the more intelligence it obtains. To narrow this gap, some tech firms are spending intentionally, with Microsoft and Nvidia specifically investing in AI-driven startups.

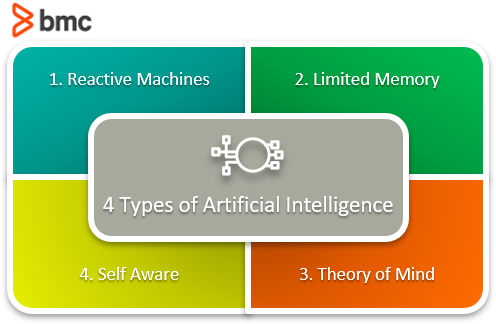

(Learn about the four types of AI.)

The pros of democratized AI

Before we get too real-worldy, let’s explore the positive potential for AI. The more that AI becomes accessible, the more companies—and users—can take advantage of it. This means that AI will go far beyond the major tech companies and we’ll see a broad diversity of companies and government agencies employing AI and changing their strategy and operations accordingly.

AIs today mostly aim to improve business and bottom-line stats through cloud suites, virtual assistants that rely on deep neural networks and natural language processing, and more.

But that’s just the tip of the iceberg.

Other potential for AI lies in using it in ways we may not normally consider. Beyond enterprise IT, artificial intelligence initiatives take aim at a lot of societal or worldwide woes:

- Fighting climate change

- Tracking police work to avoid unfair targeting

- Developing standards in healthcare AI that narrow the vast disproportion between men’s and women’s health

Most companies that successfully deploy AI are investing heavily on the tech side and paying a pretty penny for top data specialists in an economy that’s severely lacking in data scientists and data analysts. But cloud technologies are making data, and the intelligence that results from it, much more affordable.

The cheaper cloud tech gets, the more AI tools can exist, and choice means affordability. Soon, more and more companies can spend less to reap the same benefits of intelligence. And as the technology becomes more accessible, more people can specialize in it, decreasing salary costs to companies for these data-specific jobs. Colleges and universities will expand their AI offerings, minimizing the skills gap within as little as a decade.

But Gartner predicts that many of these tools will be automated, offering a level of self-service that AI doesn’t yet have. This self-service is good for enterprises but perhaps not so good for those data specialists who specifically trained in AI.

The drawbacks of democratized AI

What becomes immediately apparent when data analysts and data scientists are displaced? Data quality is uncertain, to say the least. When relying on a combination of entry-level AI specialists and automated or self-service AI tools, as could be the case in the near future, companies may be relying on poor data.

On a larger level, C-level executives might struggle to understand AI’s capabilities and imagine its potential. Though they may be eager to employ it to stay competitive, they may not know how to harness intelligence productively. And if highly skilled data scientists and data analysts are no longer “necessary” from an enterprise’s perspective, intelligence can be used in many wrong ways. After all, if data can’t make an immediate impact, what’s the point?

(Consider the ethics of using data for business.)

Results from poor data can ripple across the business, with unanticipated outcomes not evident until it’s too late. The bottom line, maybe, is that the role of data scientists may shift from specific data tasks to a role of oversight and assurance, which may prove to be a critical and worthy investment.

This move away from specialized roles—indeed, the fear of AI taking over jobs—is real. According to PwC, up to 40% of current jobs could disappear or shift drastically by 2030. This is not a reason to panic. Though jobs may shift or go by the wayside, new jobs will bubble up, much like the role of the new data scientist.

This may also prompt companies to rethink how they can better harness their employees’ skills and interests, whether by training for new skills or creating different jobs. After all, a key limitation of artificial intelligence is that it can often only automate tasks that are repeatable and predictable, not more nuanced ones.

Another problem we may face as AI democratizes is the looming awareness that bureaucratic companies and most employees don’t have the power to act quickly. A recent study suggests that with this intelligence, decision-making authority must also democratize, from leadership positions to nearly every employee within a company. This will be the only way that intelligence can actually make a difference—if it is applied at the right time, which often arrives faster than a board can enact a decision.

Airbnb is embracing data transparency by making all data accessible to all employees, a way to better equip its staff to think more broadly when making decisions. Similarly, Unilever has an in-house AI data platform that removes departmental silos, so all employees can:

- Better trust their data

- Reduce the time needed to make informed decisions

(See how AI can augment the work humans already do.)

A good example is healthcare

Healthcare is often cited as an area where AI can help immensely. Tech enthusiasts and healthcare professionals alike have long embraced the idea that technology can help improve healthcare, both in countries that lack public health options and in Western societies where healthcare, whether private or nationalized, suffers from:

- Costs

- Wait times

- Bureaucracy

Plenty of studies and real-world use cases show that AI is helping doctors and other medical pros diagnose patient issues. Indeed, AI can provide super-powered intel that would otherwise take a medical team hours or days to research or troubleshoot. But information alone doesn’t solve all of our problems, healthcare or otherwise: in bureaucratic institutions like private hospitals or government health clinics, intelligence can’t speed up the time it takes to make an informed decision.

Technology in healthcare can also fall short: healthcare isn’t merely about diagnoses, after all. Instead, a strong, well-balanced medical professional needs empathy and an ability to develop an appropriate treatment plan with a holistic approach. (See the recent case of a doctor who told a patient he was dying via live video.)

Importantly, AI relies on vast amounts of quality data. But we have known for a long time that health data skews significantly toward white males, so our data about women or persons of color is severely limited. How, then, can we trust the decisions AI makes when the data is not exactly robust? Such data, though it is real, can perpetuate social and economic bias that already exists in healthcare. Perhaps a neutral AI isn’t always the right answer.

As positive potential and realistic fears about AI abound (what about the black box? are we relying too heavily on intel?), we must remember that AI should be a tool for us, not humans a tool for AI. Large and small companies alike will have to deal with issues like whether to make AI accessible to all employees and how to promote effective, front-line decision making in order to stay responsive and agile in an ever-changing market.

But companies will increasingly bump up against the ideas of what they owe to the world in terms of AI output. Creating technologies may not be enough. We’ll all have to consider efficiencies, accuracy, and, most importantly, whether AI is equitable downstream.