Artificial AI (artificial artificial intelligence, or AAI) is fake AI. That is: it is not actual artificial intelligence.

A real AI uses a machine learning model to predict a result. An artificial AI might look like AI, but it’s just a person on the other end responding to requests.

Artificial AI is usually associated with companies using third parties and low-wage labor to simulate artificially intelligent features. Often, an outside service like Amazon Mechanical Turk is used.

Where there is great value, there are great frauds.

The value of true AI

AI is a revolutionary technology capable of eliminating the need for teams of people to calculate a single decision. Its value lies in making complex calculations routine across many unique situations.

But its value has also attracted many frauds.

For some, adopting fake AI is worthwhile just to make a quick buck from the attention it draws. True artificial intelligence is loosely defined (or, more to the point, not well understood), so marketing pushes the term AI, which attracts more investors, employees, and customers.


Companies using the term appear more tech-savvy. The .ai domain name is a go-to for startups that want to show off their skills; they only need the name, not any proof of the technology.

However, anybody working with AI knows that building an AI is complicated. Achieving true AI requires:

- The know-how

- The data

- The computational resources

Lots of money is spent just to build a single model. Then more money is spent on R&D to build models that, unfortunately, never end up working because:

- The problem scope was too wide.

- There was insufficient data on which to train the model.

When a dishonest company realizes it doesn’t have the skills to make it happen itself, it turns to the next best option: simulate AI, and don’t tell anyone.

Data annotation is a real problem

The machine learning (ML) world has a real data problem. In order to have good, working AI, the data has to be there.

What these fake AI companies are doing is outsourcing their data annotation. Whether that excuses them depends on whether they:

- Actually have third parties annotating data as part of their ML pipeline.

- Are just having people listen to and interpret requests in place of a model.

For example, human-in-the-loop machine learning is a real AI methodology. It acknowledges the data problem and feeds its annotations directly back into the machine learning model, so the model trains on good, live, labeled data. Over time, it becomes a well-oiled loop.
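To make the human-in-the-loop idea concrete, here is a minimal Python sketch. It assumes a toy keyword-count classifier and a hypothetical `ask_human` callback standing in for an annotation team; every name here (`KeywordModel`, `human_in_the_loop`, `ask_human`, the 0.8 confidence threshold) is illustrative, not a real library or any particular company’s pipeline. What it shows: requests the model is unsure about go to a person, and that person’s label is added to the training data and the model is retrained on it.

```python
from collections import Counter, defaultdict


class KeywordModel:
    """Toy text classifier (hypothetical): scores labels by word/label co-occurrence counts."""

    def __init__(self):
        self.word_label_counts = defaultdict(Counter)

    def train(self, examples):
        # Rebuild the word -> label counts from every labeled example seen so far.
        self.word_label_counts = defaultdict(Counter)
        for text, label in examples:
            for word in text.lower().split():
                self.word_label_counts[word][label] += 1

    def predict(self, text):
        # Sum the label counts of each known word; confidence is the winning label's share.
        scores = Counter()
        for word in text.lower().split():
            scores.update(self.word_label_counts.get(word, Counter()))
        total = sum(scores.values())
        if total == 0:
            return None, 0.0  # no known words: the model has no opinion
        label, count = scores.most_common(1)[0]
        return label, count / total


def human_in_the_loop(model, seed_examples, requests, ask_human, threshold=0.8):
    """Answer requests with the model; route uncertain ones to a human, then retrain."""
    labeled = list(seed_examples)  # copy so the caller's list is not mutated
    model.train(labeled)
    answers = []
    for text in requests:
        label, confidence = model.predict(text)
        if confidence < threshold:
            label = ask_human(text)        # a person supplies the answer...
            labeled.append((text, label))  # ...the answer becomes training data...
            model.train(labeled)           # ...and is fed straight back into the model
        answers.append(label)
    return answers, labeled


if __name__ == "__main__":
    seed = [("refund my order", "billing"), ("reset my password", "account")]
    human_labels = {"cancel subscription": "billing"}  # hypothetical annotator answers
    answers, data = human_in_the_loop(
        KeywordModel(),
        seed,
        ["refund please", "password help", "cancel subscription"],
        ask_human=lambda text: human_labels[text],
    )
    print(answers)  # ['billing', 'account', 'billing']
```

In a fake AI setup, by contrast, `ask_human` would answer every request and `model.train` would never be called, so the system would never improve on its own.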

So, what’s the difference between this real ML and some fake AI companies? How they use their human annotators.

Usually, in true AI, the human-labeled data fits right into the machine learning model lifecycle. Fake AI companies, on the other hand, use human labor only to fulfill the task, and the data isn’t fed into an ML pipeline. Sometimes the data isn’t even collected at all.


Examples of artificial AI

In one case, a San Francisco-based company has people mark points on a map so its delivery robots can reach their destinations. The robot uses AI to avoid anything directly in its path, but it relies on Colombian workers to regularly mark short paths on a map for the robot to travel.

“The Kiwibots do not figure out their own routes. Instead, people in Colombia, the home country of Chavez and his two co-founders, plot “waypoints” for the bots to follow, sending them instructions every five to 10 seconds on where to go.”

In another case, a Chinese company provided on-the-spot voice-to-text translation, but used teams of annotators on the back end to listen to the audio and type it out.

The delivery bots may not be doing anything ethically wrong. I don’t believe they were selling the waypoint portion as AI, whereas the live transcription group was selling its platform and raising investor money on the basis that its technology was powered by AI—and it wasn’t.

The delivery bots, at first glance, might be collecting their waypoint data and pushing it through an ML pipeline, so that eventually the ML model can make accurate predictions about how to reach its destination. (Normal map technologies use roadways. These robots probably need a database of sidewalks, crosswalks, and pedestrian bridges to travel.)

The real difficulty when looking at a company is discerning which parts of the company are AI and which are not.

In an unfamiliar field, it is possible for the team behind the Kiwibots to slap an AI label onto its product. It is then up to anyone placing a valuation on that company to dig deeper and discern which parts of its organization are run by AI and which are not. The valuation needs to consider:

- What is the value of each AI product within the company?

- If the company is faking AI yet claims to have AI, does it have the ability to convert the data it’s actively annotating into a real ML model, or is that effort wasted?

Regardless of whether the AI is fake or real, the path to creating a real machine learning model for an AI to use requires quality data. The data needs to be tagged, whether by an outsourced group, employees at the company, or the users themselves.

Finally, whether the “AI” is real or fake depends on whether this tagged data is pushed into an actual ML model, and whether that model is giving the responses—or if the annotators are responding instead.

AI vs AAI

So, let’s sum up these key differences between AI and AAI.

Real AI

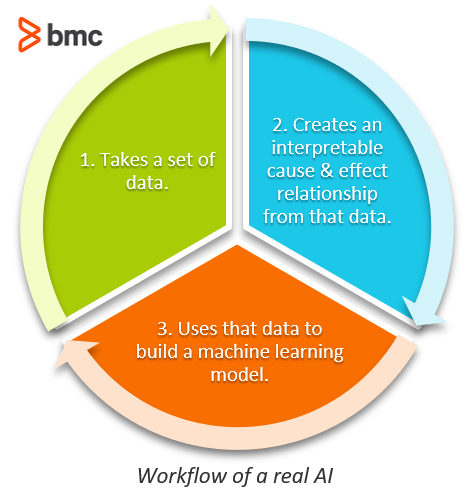

Real AI uses statistics to make a prediction, given some data inputs. At its core, real AI follows this workflow:

- Takes a set of data.

- Creates an interpretable cause and effect relationship from that data.

- Uses that data to build a machine learning model.

If the whole process is done well, the resulting model can then make accurate predictions around the defined cause and effect relationship. Each of these steps is incredibly important, and together they produce a model that can make predictions in the given problem space without a human having to get involved.
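As a rough illustration of that workflow (take data, learn a relationship, then predict with no human answering), the sketch below fits a straight line to a handful of made-up numbers using ordinary least squares, one of the simplest statistical models, in plain Python. The data and the function names `fit_line` and `predict` are hypothetical; production systems use far richer models and libraries. The fitted slope and intercept are the learned relationship, and `predict` is the model responding on its own.

```python
def fit_line(xs, ys):
    """Fit y = intercept + slope * x by ordinary least squares.

    Requires at least two distinct x values (otherwise the variance is zero).
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the intercept makes the line pass through the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(model, x):
    """Use the fitted line to predict an output for a new input, with no human involved."""
    intercept, slope = model
    return intercept + slope * x


if __name__ == "__main__":
    # Made-up data: an input feature and the outcome observed for it.
    xs = [1, 2, 3, 4]
    ys = [3, 5, 7, 9]
    model = fit_line(xs, ys)  # take the data, learn the relationship, build the model
    print(model)              # (1.0, 2.0)
    print(predict(model, 5))  # 11.0
```

Each new prediction costs a multiplication and an addition rather than a person’s time, which is the property fake AI cannot replicate.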

The costs to build it include knowledge of the process and computational resources. The dataset can be costly to get, too.

Fake AI

Fake AIs, however, skip all of this engineering and hire low-wage labor to do the work. Fake AIs are common in these industries and services:

- Chatbots

- Virtual assistants

- Transcription and recognition services (detecting objects in images or words in speech)

A chatbot can be hard to build. Human conversations can go in any direction, so for a chatbot to work, the parameters around the dialog must be incredibly well defined. Chatbots are probably one of the most widely adopted fake AIs, because it is much easier to have a third party answer requests than it is to actually build the UI and models.

Often, people would rather talk with a person anyway.

Benefits of real AI

When you use real AI, you get these benefits that fake AI just can’t touch:

- Ability to scale decisions. If a consultancy firm wanted to serve more customers, it would have to hire more consultants. An AI can perform the same analysis on the same kind of problem over and over, with no new hires needed.

- More dynamic offerings by a company. A company can use AI to make the data it presents more diverse, filter through more data, present important data, and cater more specifically to wider segments of the market.

- Operate around the clock & on-demand. People are expensive. Maintaining a workforce requires a human resources team, bathrooms, and break times, paying market rates to staff round-the-clock shifts, and navigating all the nuanced social games people play with one another at work. Using an AI avoids these problems. If there is a surge of callers, an AI service running on Kubernetes, for instance, can simply open a user’s chat session on another compute instance.

The harm of artificial AI

So, what’s it to you? If you still receive a service you are happy with, even though it is not real AI, who cares? For most people, it likely matters very little.

The cost of fake AI is probably situational and depends on what you expect from the AI. Are people claiming their AI will cure cancer? Possibly. Such claims haven’t stopped fake doctors from scamming a dollar out of a sick person.

Depending on the arrangement, the harm of adopting a fake AI can be:

The long-term value you receive is likely smaller. If these companies take the easy way out in the short run, they are likely making a quick buck that won’t last. Whatever service you are receiving may have to change in the future. If you and others need the service, then even if this company fails, someone else will probably step in to fill the demand. But you’ll be left to navigate those changes, not the company that closed up shop.

It can be costly, especially if you are an investor. The reason should be obvious: investors aim to find good deals, ones that can make them money. Scams may help scammy investors, but most people want to build something that will last and make good money.

The Theranos investors thought they were going to strike it rich—and contribute to a good cause.

Theranos handled its blood analysis problem much as fake AI companies do: it outsourced its analysis directly to other blood analysis companies, with no real way of doing it itself. After being exposed as a fake, the multi-billion-dollar company was worth pennies, and all investors lost their money.

It harms the industry and user adoption. AI is a useful technology, and accurate information about it should be spread. Fake AIs hurt future user adoption of AI.

It is similar to fracking and nuclear power. Both are tremendously beneficial ways of obtaining energy, but when bad engineers do a poor job of fracking and pollute the water of whole towns, or when misinformation is spread about the dangerous radiation effects of nuclear power, the progress and development of both technologies are slowed down.

A big cost of fake AI is the damage it does to user adoption of real, useful technologies.

Checking for fake AI

Here are some ways to sniff out whether an AI is real or artificial:

- If the claim is too big. If it sounds too good to be true, it probably is. If they claim to use AI to build a fully functional website for you in 15 minutes, it might not be AI.

- If the result is going to take a couple of days to compute. That couple of days is likely the wait time for a real person to get to your request.

- Ask the virtual assistant a logical question. Chatbots and virtual assistants are good at delivering information because they are trained on specific intents. That technology has come a long way, but they are still awful at basic computation and reasoning, such as “What is 344 * 12?” or “If blue is after yellow, and yellow is before blue, what order are the colors in?”

Fake AI is bad for real AI

Fake AI is bad for the industry and for its future. It gets lumped in with deepfakes and fake news articles, painting a bad picture of the world of artificial intelligence. AI is a useful tool for engineers, and it can be useful in personal goods, too.

For companies, AI can be really useful, but for most companies’ use cases, real AI likely involves more tooling and engineering than is truly necessary or valuable.

But AI is a technology that can enable a new breed of companies to provide on-demand, personal services. Artificial artificial intelligence undermines the benefits it could grant in the future.


These postings are my own and do not necessarily represent BMC's position, strategies, or opinion.
