Technology workers love metrics—maybe too much! This love of numbers, graphs, and reports too often leads to a situation that I like to call analysis paralysis. We produce and analyse numbers that just don’t really matter. We need to stop reporting just because we can and instead start producing reports that actually add value.

In this article, I’m sharing my insight on how to choose IT metrics that matter.

IT metrics in the real world

Recently, a question was posed in an online service management forum that I participate in. The question was about SLAs for problem management. The premise was wrong: SLAs are not relevant in the problem management space, because problems are highly complex and we cannot guarantee resolution times. What the question was really asking about was useful metrics for problem management.

The first question we need to ask is “What is useful for our customers to understand about the performance of our problem management practice?”

The answer to this is not as easy as it would seem. In reality, the average customer doesn’t really care much about problem management: current problems should have valid workarounds, and recurrences will be dealt with by incident management.

Metrics should matter—to your customers

This question prompted a lengthy discussion with a wide range of viewpoints and suggestions. The common thread, though, was to ensure that the metrics that you do produce actually matter to your customer. Put yourself in your customers’ shoes or, better still, go and talk to them to find out what is important.

I did this exercise recently and what we came up with were a couple of simple measures:

- How long did we take to identify the problem statement?

- When did we get an effective team together to work on the problem?

These measures were all about how we responded to the problem. Our customers just wanted to know that we were taking things seriously, understood what we were looking at, and were actively working on finding a permanent solution. With the problem identified correctly and the right people working on it, all they then wanted were regular reports on progress, not more metrics.
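As a rough illustration, the two measures above can be derived from three timestamps on a problem record. This is only a sketch: the field names (`raised`, `statement_agreed`, `team_assembled`) and the dates are hypothetical and do not come from any particular ITSM tool.

```python
from datetime import datetime


def elapsed_hours(start: datetime, end: datetime) -> float:
    """Return the elapsed time between two timestamps in hours."""
    return (end - start).total_seconds() / 3600


# Hypothetical problem record; field names and dates are illustrative only.
problem = {
    "raised": datetime(2024, 3, 4, 9, 0),
    "statement_agreed": datetime(2024, 3, 4, 13, 30),
    "team_assembled": datetime(2024, 3, 5, 9, 0),
}

# How long did we take to identify the problem statement?
time_to_statement = elapsed_hours(problem["raised"], problem["statement_agreed"])

# When did we get an effective team together to work on the problem?
time_to_team = elapsed_hours(problem["raised"], problem["team_assembled"])

print(f"Problem statement agreed after {time_to_statement:.1f} hours")
print(f"Team assembled after {time_to_team:.1f} hours")
```

Elapsed clock time is used here for simplicity; a real report might count business hours only.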

The challenge with ITSM metrics

Arbitrary times set for resolution of problems just don’t make sense and provide little or no value, but still many organisations report these metrics to customers, simply because they can.

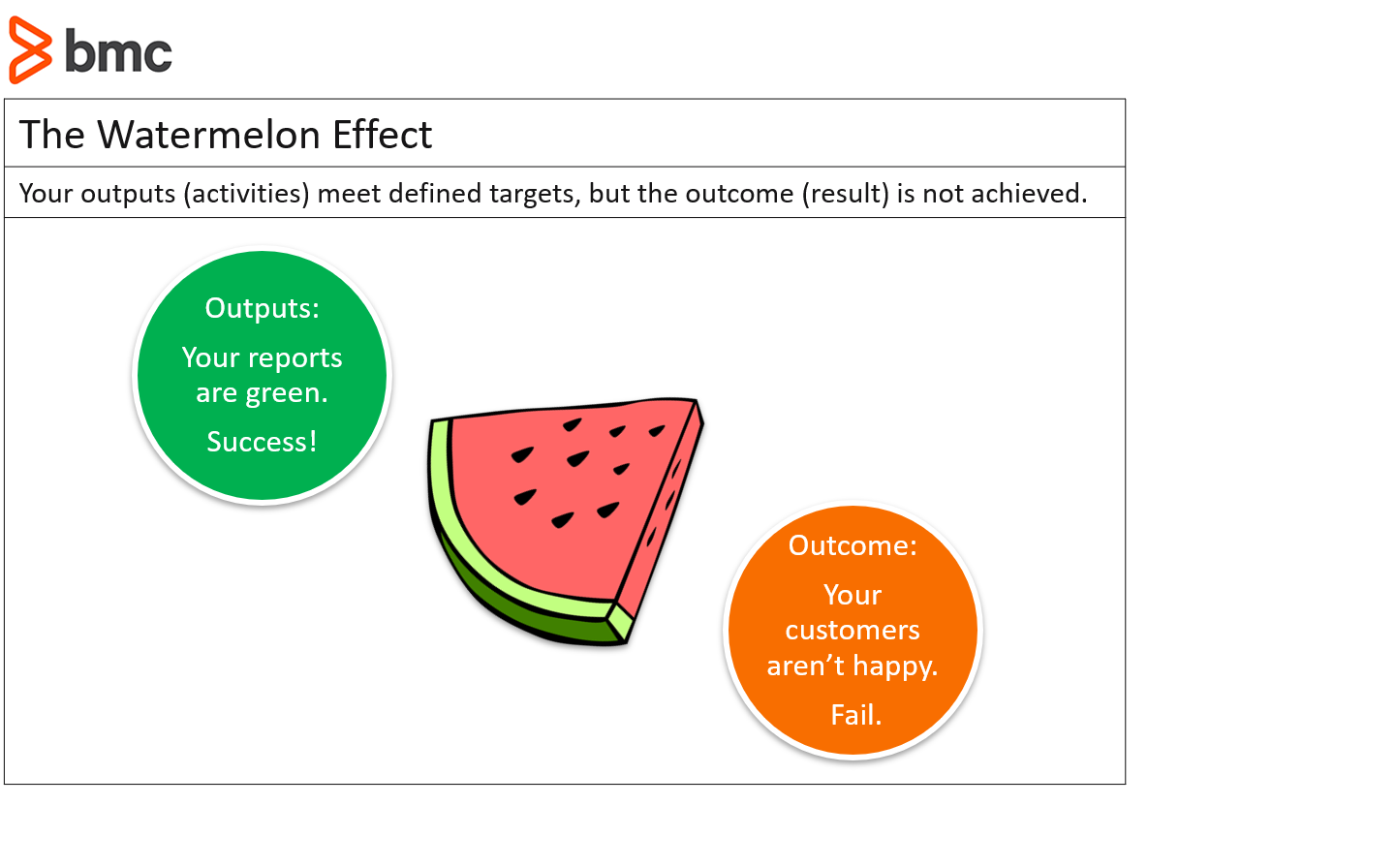

One of the biggest problems with easy-to-extract metrics is that they often lead to the watermelon effect: a state where the metrics you are providing to your customers show that everything is green, but the customer is still unhappy with the service they are receiving. This is because the metrics that are harder to extract, but more relevant, are in the red. Just like a watermelon, the service looks green on the outside, but inside it is all red.

Do your metrics show critical information?

A good example of the watermelon effect is found in one of the most common metrics in incident management: time to resolve. We will cheerfully send reports to our customers showing that 95% of our incidents are resolved within their targeted SLA time, complete with lovely graphs all showing pretty green traffic lights.

But go talk to the customer and find out what they really think of the service they are receiving. You may find a different response to the one you were expecting.

Imagine that your report covers 750 lower priority incidents and the majority were resolved by the service desk within 10 minutes. Sounds pretty good, doesn’t it? The issue, however, is that 400 of these calls were for the same type of issue, which took, on average, three minutes to resolve.

This represents 400 interruptions to productivity and, no matter how short each interruption is, that has a major impact on the value delivered by the affected business unit. In total, the unit lost 1,200 minutes during this period: that’s 20 hours, or between two and three working days of lost time!
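The arithmetic behind that figure is easy to check. The sketch below uses the numbers from the example (400 incidents at three minutes each); the 8-hour working day is my assumption, not something stated above. With an 8-hour day the total comes to 2.5 working days, and a shorter working day pushes it closer to three.

```python
def lost_time(incident_count: int, avg_minutes: float, working_day_hours: float = 8.0):
    """Return total lost time as (minutes, hours, working days).

    working_day_hours defaults to 8, which is an assumption for illustration.
    """
    minutes = incident_count * avg_minutes
    hours = minutes / 60
    working_days = hours / working_day_hours
    return minutes, hours, working_days


# Figures from the example: 400 repeat incidents, three minutes each on average.
minutes, hours, days = lost_time(400, 3)
print(f"{minutes} minutes = {hours:.0f} hours = {days:.1f} working days")
```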

Choosing the right metrics

This is a clear indication that the metrics we were producing were not sufficient to give a true picture of how well we were performing. So, what could we do to give a more accurate picture of the real customer impact of IT outages? Here are a few suggested metrics you could consider:

- Downtime by service

- Incident count by problem

- Incident count by customer

- Incident count by business unit

The simple act of having to log an incident represents a disruption to productivity. If a particular customer or business unit is having to log a disproportionate number of incidents, even unrelated ones, they are losing business value. This should raise a red flag and prompt an investigation into why it is happening. At the very least, it shows a level of dissatisfaction with IT services that is higher than that of other similar customers or business groups.
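To make the idea concrete, here is a minimal sketch of counting incidents by business unit and by problem, then flagging any group whose count looks disproportionate. Everything in it is an assumption for illustration: the record fields, the sample data, and the rule of thumb of "more than twice the median count" are mine, not a standard.

```python
from collections import Counter
from statistics import median

# Hypothetical incident records; field names and values are illustrative only.
incidents = (
    [{"business_unit": "Finance", "problem": "password-reset"}] * 4
    + [{"business_unit": "Finance", "problem": "printer"}] * 2
    + [{"business_unit": "Sales", "problem": "password-reset"}] * 2
    + [{"business_unit": "HR", "problem": "vpn"}]
)


def count_by(records, field):
    """Count incidents grouped by the given field."""
    return Counter(record[field] for record in records)


def flag_disproportionate(counts, factor=2.0):
    """Return groups whose count exceeds `factor` times the median count.

    The threshold is an arbitrary rule of thumb, chosen for this sketch.
    """
    threshold = factor * median(counts.values())
    return sorted(key for key, n in counts.items() if n > threshold)


by_unit = count_by(incidents, "business_unit")
by_problem = count_by(incidents, "problem")

print("Incident count by business unit:", dict(by_unit))
print("Incident count by problem:", dict(by_problem))
print("Units to investigate:", flag_disproportionate(by_unit))
```

In practice, the right threshold depends on the size of each group and what is normal for your organisation, so treat the flag as a prompt for a conversation rather than a verdict.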

Best practices for choosing metrics

I know that, early in my ITSM career, we liked to produce extensive reports for management that covered virtually every aspect of our ITSM response that we were able to report on. Each month we delivered multi-page reports to the CIO. Whether they were ever read, I don’t know, but I have my suspicions that they ended up in the round filing basket, unopened.

There are very good and valid reasons for this response. By the end of the month, much of the information contained in these reports was no longer relevant, the metrics didn’t relate to business value, and they took too much effort to read and understand.

When it comes to choosing which metrics to track and report on, remember our initial question: “What is useful for our customers to understand about the performance of our practice?”

Keep that question in mind, and stick to my best advice:

- Keep it simple

- Make it relevant

- Be timely

Keep it simple

Keep regular reports to one page wherever possible. Cover highlights and exceptions only. A cursory glance at the report should tell your management if there is anything they should be immediately concerned about.

Make it relevant

Everything we report on should be related to business value. Look at what you are reporting on: is there a link through to the vision and mission of your organisation? Do the activities you are reporting on contribute to achieving them? If not, then you are most likely reporting on the wrong things.

Be timely

Don’t wait for your scheduled reporting times to highlight issues to IT or business management leaders. Keep them informed of issues as they occur. The last thing your management team wants is to be hit with a nasty surprise weeks after the event. Modern service management tools give you the ability to deliver real-time dashboards to management. Set these up and make sure alerts are configured for the situations management should be aware of. But don’t simply rely on them seeing these. If the situation is important, alert them to the issue personally.

I hope this has given you some food for thought about the metrics you are reporting on. The most important point I will reiterate is to make sure that you consider the delivery of business value in all reporting. This is, after all, what IT is for.
