Even as businesses realize the competitive advantages of moving to multi-cloud and edge deployments, major infrastructure and security challenges still abound. That’s according to a recent survey of more than 400 global IT executives by Volterra, an innovator in distributed cloud services, and Propeller Insights.

Cloud and multi-cloud

According to the survey, 70 percent of IT leaders think it’s “very important” to have a consistent operational experience across the edge and public and private clouds. On the multi-cloud front, 97 percent indicated they plan to distribute workloads across two or more clouds, with three key goals:

- Maximize availability and reliability (63 percent)

- Better meet regulatory and compliance requirements (47 percent)

- Leverage best-of-breed services from each provider (42 percent)

The benefits of multi-cloud deployments are many: better availability and reliability, easier compliance with local regulations in each geography, and access to the individual strengths of each platform. Yet issues remain with security, connectivity, and performance, as well as with inconsistent service offerings across providers. The IT leaders surveyed ranked their top three multi-cloud challenges as:

- Secure and reliable connectivity between providers (60 percent)

- Different support and consulting processes (54 percent)

- Different platform services (53 percent)

Edge computing

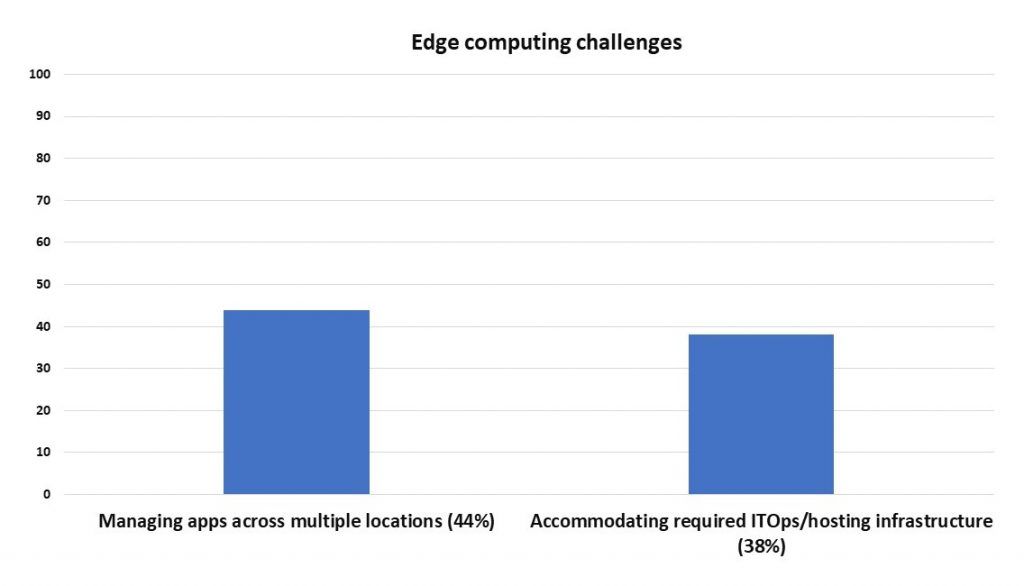

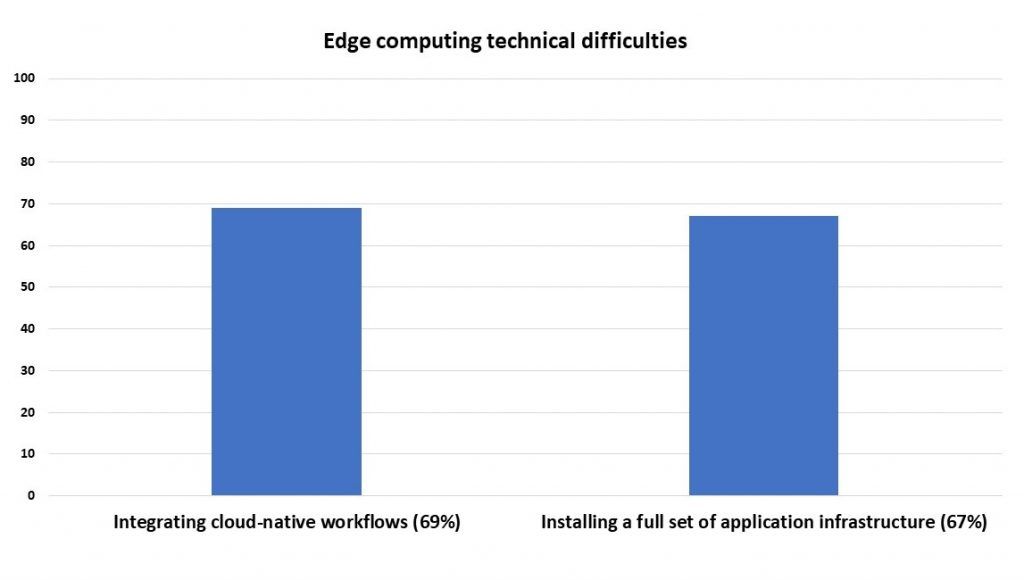

As for edge computing, survey respondents reported they were deploying apps that way to support Internet of Things, or IoT (57 percent), smart manufacturing (52 percent), and content delivery (46 percent). Fifty-four percent said they preferred edge to the cloud because they need to control and analyze data for local use cases; and 47 percent reported a preference for edge because there was too much latency when sending edge data to public, cloud-based apps. There are still some challenges and difficulties with edge to overcome, as shown in the figures below.

Separately, 37 percent cited a lack of resources or time to keep applications and infrastructure up to date across the full lifecycle of long-term edge deployments, while 26 percent reported difficulty managing distributed clusters as siloed instances rather than as a single resource.

Distributed models such as edge computing have long been attractive because they minimize network latency and put functions closer to the consumers of the technology. With the explosion of network-connected devices, the massive volumes of data generated by growing IoT adoption, and new compute and application architectures, edge computing makes sense today for a variety of reasons. These include the traditional goals of optimizing network capacity and performance, as well as new opportunities for local pre-processing of all kinds of data sources (telemetry, video, mobile, and so on).

Edge computing perspective

We see customers adopting edge computing models where it makes sense. However, distributing compute, applications, and data creates new management challenges for performance, control, and governance. We encourage customers to think about how they will extend their IT and data management processes and approaches to the edge.

If you’re considering a move to the edge, you should have a long-term vision and plan (including stakeholder perspectives) for your mix of datacenter, cloud, and edge resources, based on current and future use cases. Looking through this lens will help you make appropriate technology choices not just for today, but also for five years from now.

Next, think about your edge applications and their distributed architectures, and how you will support them from a deployment, monitoring, capacity, and service management point of view. Consider, too, how your overall automation strategy extends to edge use cases. Finally, because edge computing can expand the “attack surface” of your IT estate, it’s important to consider your service-level needs and adopt a multi-layer security approach to help ensure you are adequately protected.

These postings are my own and do not necessarily represent BMC's position, strategies, or opinion.
